In this post, we will see how to configure Filebeat to post data to a Logstash server. Filebeat connects using the IP address and port (socket) on which Logstash is listening for Filebeat events, so we also need to configure Logstash to listen for and receive the events from Filebeat.

First, we need to download the latest Filebeat application and install it on the application servers where the logs are generated. We will start with the configuration of Filebeat. Please note that I am using a RHEL/CentOS based server and the installation instructions are based on that platform, but the steps are almost the same for other platforms as well.

First off, download the latest Filebeat zip or tar.gz file from the Filebeat download page. As of this writing, Filebeat is at version 5.1.1, and I am using the link for Linux 64-bit. Then extract the files from the archive.

The host name is the system where Logstash is running, and the port is the port on which Logstash is listening for events from Filebeat. This needs to be the same port that Logstash is configured with in the …nf file.

Starting Filebeat

Once the configuration is done, we can start Filebeat by running the following command from the Filebeat installation directory:

    nohup ./filebeat -e -c filebeat.yml -d "publish" > /dev/null 2>&1 &

You may also put this command in a script and run it from a different location. In that case, make sure that the paths to the filebeat binary and the filebeat.yml file are absolute.

I am redirecting the output to /dev/null because -e sends Filebeat's own logs to stderr and -d "publish" turns on debug logging for every event published. The logs generated for each line shipped are big, and on a high-traffic system they were getting really huge. You can keep these logs by specifying a log file name instead of /dev/null.

NOTE: nohup is used to make sure that the program does not hang up when you close the SSH session.

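
Because Filebeat can only ship events if it can reach Logstash at the configured host and port, it can help to check connectivity from the application server before starting it. Below is a minimal Ruby sketch; port_open? is a hypothetical helper (not part of Filebeat or Logstash), and it only confirms that something accepts TCP connections on the port, not that the listener is actually a Logstash beats input.

```ruby
require "socket"

# Hypothetical helper: returns true if a TCP connection to host:port succeeds
# within `timeout` seconds, false otherwise. It only checks that something is
# listening; it does not verify that the listener is a Logstash beats input.
def port_open?(host, port, timeout: 3)
  # With a block, Socket.tcp yields the socket, closes it afterwards,
  # and returns the block's value.
  Socket.tcp(host, port, connect_timeout: timeout) { |_sock| true }
rescue SystemCallError, SocketError, IOError
  # Covers refused connections, unreachable hosts, timeouts and DNS failures
  false
end
```

For example, port_open?("logstash-host", 5044) would check a hypothetical host named logstash-host on port 5044, the port used for the beats input in Elastic's examples. Substitute the host and port from your own configuration.
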
Forget using SSH when you have tens, hundreds, or even thousands of servers, virtual machines, and containers generating logs. Filebeat helps you keep the simple things simple by offering a lightweight way to forward and centralize logs and files.

Basically, what this means is that Filebeat can reside on the application server and monitor a folder location, sending the logs as events to Logstash running on the ELK server.

Not sure if there is another way to send some messages to Logstash and others directly to Elasticsearch from a single Filebeat process, but this at least got my data into Elasticsearch so I can move on.

    # List of root certificates for HTTPS server verifications
    # Certificate for SSL client authentication
    #ssl.certificate: "/etc/pki/client/cert.pem"
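
The idea of Filebeat sitting on the application server, watching a folder and turning new log lines into events can be sketched in a few lines of Ruby. This is a simplified illustration under stated assumptions, not how Filebeat is implemented: harvest_new_lines is a hypothetical method, the offsets already shipped are kept in an in-memory hash rather than persisted, and only complete (newline-terminated) lines become events.

```ruby
# Simplified illustration only, NOT Filebeat's implementation: watch the files
# matching a glob pattern and turn each newly appended, complete line into an event.
# `offsets` maps each file path to the byte position already shipped (kept in memory).
def harvest_new_lines(pattern, offsets)
  events = []
  Dir.glob(pattern).sort.each do |path|
    start = offsets.fetch(path, 0)
    start = 0 if File.size(path) < start # file was truncated: start over
    File.open(path, "rb") do |f|
      f.seek(start)
      while (line = f.gets)
        # Leave a partially written last line for the next pass
        break unless line.end_with?("\n")
        events << { "source" => path, "offset" => start, "message" => line.chomp }
        start += line.bytesize
      end
    end
    offsets[path] = start
  end
  events
end
```

Calling it repeatedly with the same offsets hash, for example harvest_new_lines("/var/log/myapp/*.log", offsets) with a hypothetical log path, returns only the lines added since the previous call; a real shipper would then send each event on to Logstash.
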

Next, while there might be other ways around it, what I did was update my filebeat.yml and set my output to Elasticsearch. This allowed me to send my IIS logs directly to Elasticsearch instead of going through Logstash first.

    # Configure what output to use when sending the data collected by the beat.
    # Protocol - either `http` (default) or `https`.
    # Authentication credentials - either API key or username/password.

    subsecond = subsecond.to_f / (10 ** subsecond.length)
    event.set("elapsed", 3600 * event.get("hours").to_f + 60 * event.get("minutes").to_f + event.get("seconds").to_f + subsecond)
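
To check the arithmetic outside Logstash, here is the same calculation as a plain Ruby method. elapsed_seconds is a hypothetical name, and it assumes subsecond holds only the digits after the decimal point (for example "250" for .250), which is what dividing by 10 ** subsecond.length implies.

```ruby
# Hypothetical standalone version of the calculation in the Logstash ruby filter:
# converts hours, minutes, seconds and a fractional-digit string to total seconds.
# Assumes subsecond holds only the digits after the decimal point ("250" for .250).
def elapsed_seconds(hours, minutes, seconds, subsecond)
  fraction = subsecond.to_f / (10 ** subsecond.length) # "250" -> 0.25, "" -> 0.0
  3600 * hours.to_f + 60 * minutes.to_f + seconds.to_f + fraction
end

puts elapsed_seconds("1", "02", "03", "250") # prints 3723.25
```
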

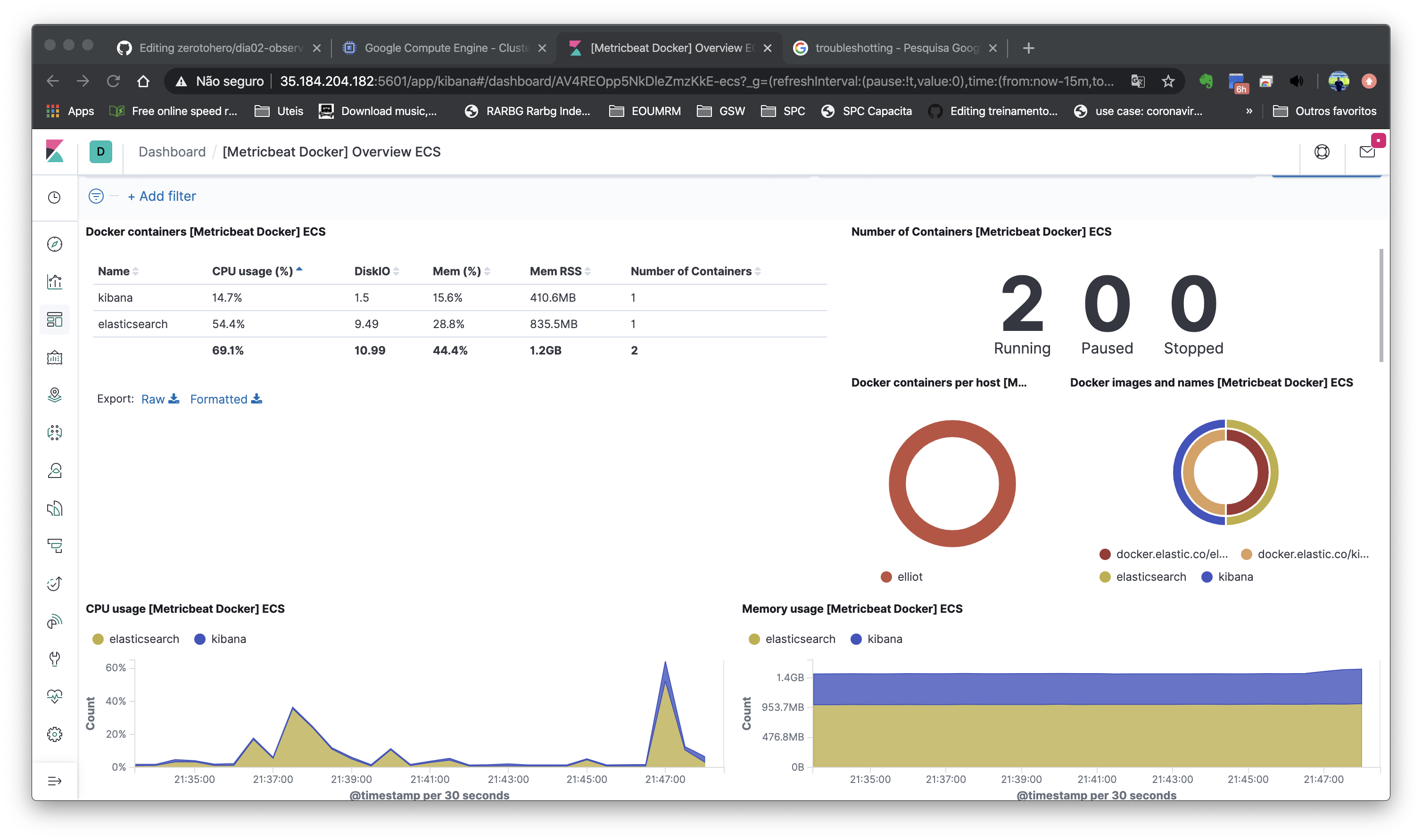

I'm trying to set up the Filebeat IIS module (link) so I can display IIS (version 10) logs in the canned Kibana dashboards; however, I get errors in Logstash when parsing the messages, which prevents them from displaying correctly.
